The Colors of Cities

February 21, 2022

Making art from OpenStreetMap and Google Street View to represent the colors of cities

A friend visited me a while back in San Francisco, and made a comment about how much more pastel the city felt versus the green of Portland. This got me thinking, and I decided to hack together a little art project to represent the colors of the three cities I’ve lived in.

My idea was to collect points of interest within each city, and use the corresponding Google Street View images at each point to somehow decide on the color(s) of each city. It’s not particularly novel, but I decided on a Voronoi diagram of the street view images. Each cell was colored based on the mean color of the horizontal street view images (i.e. no ground or sky).

Source code to this project is on GitHub.

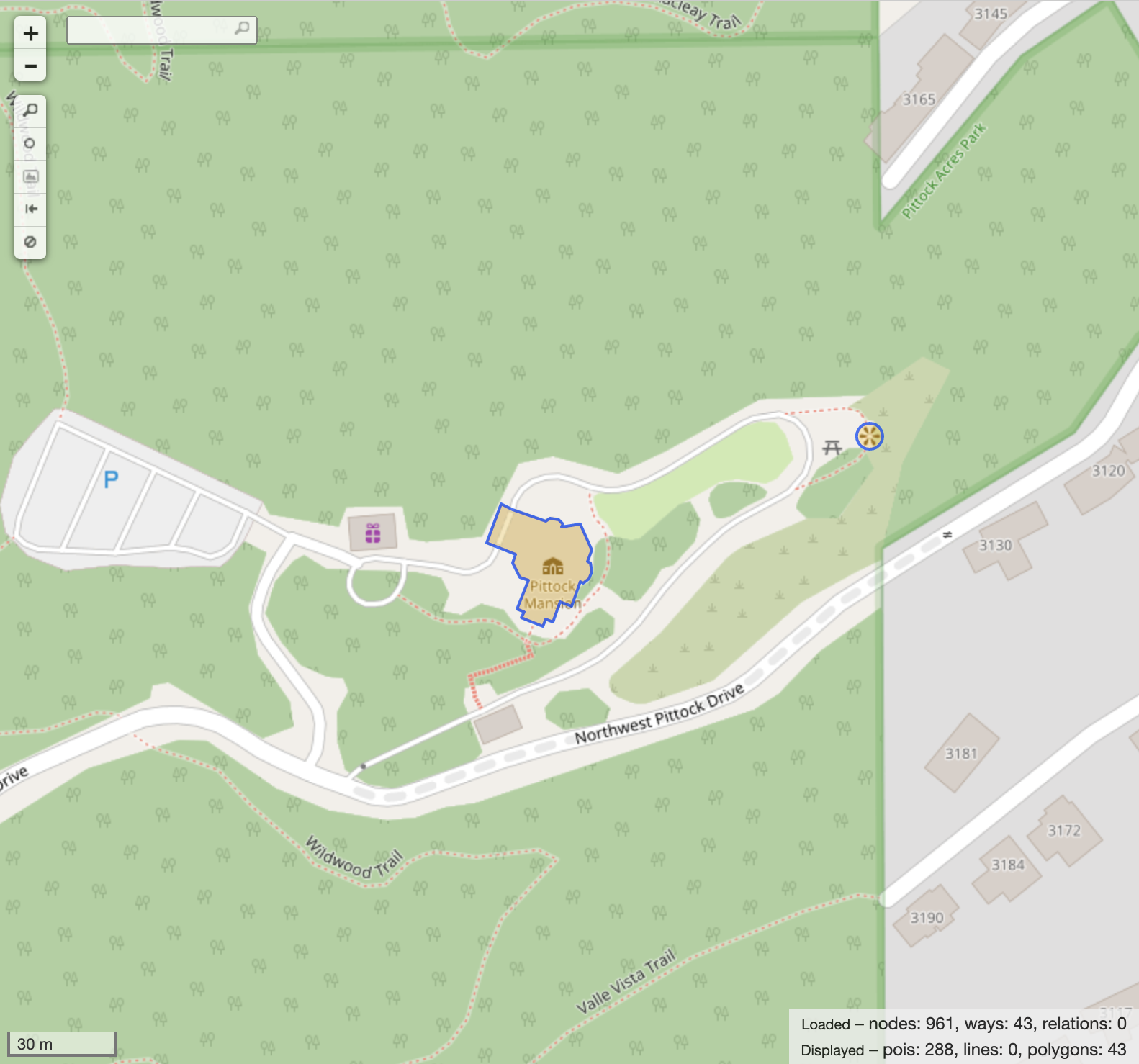

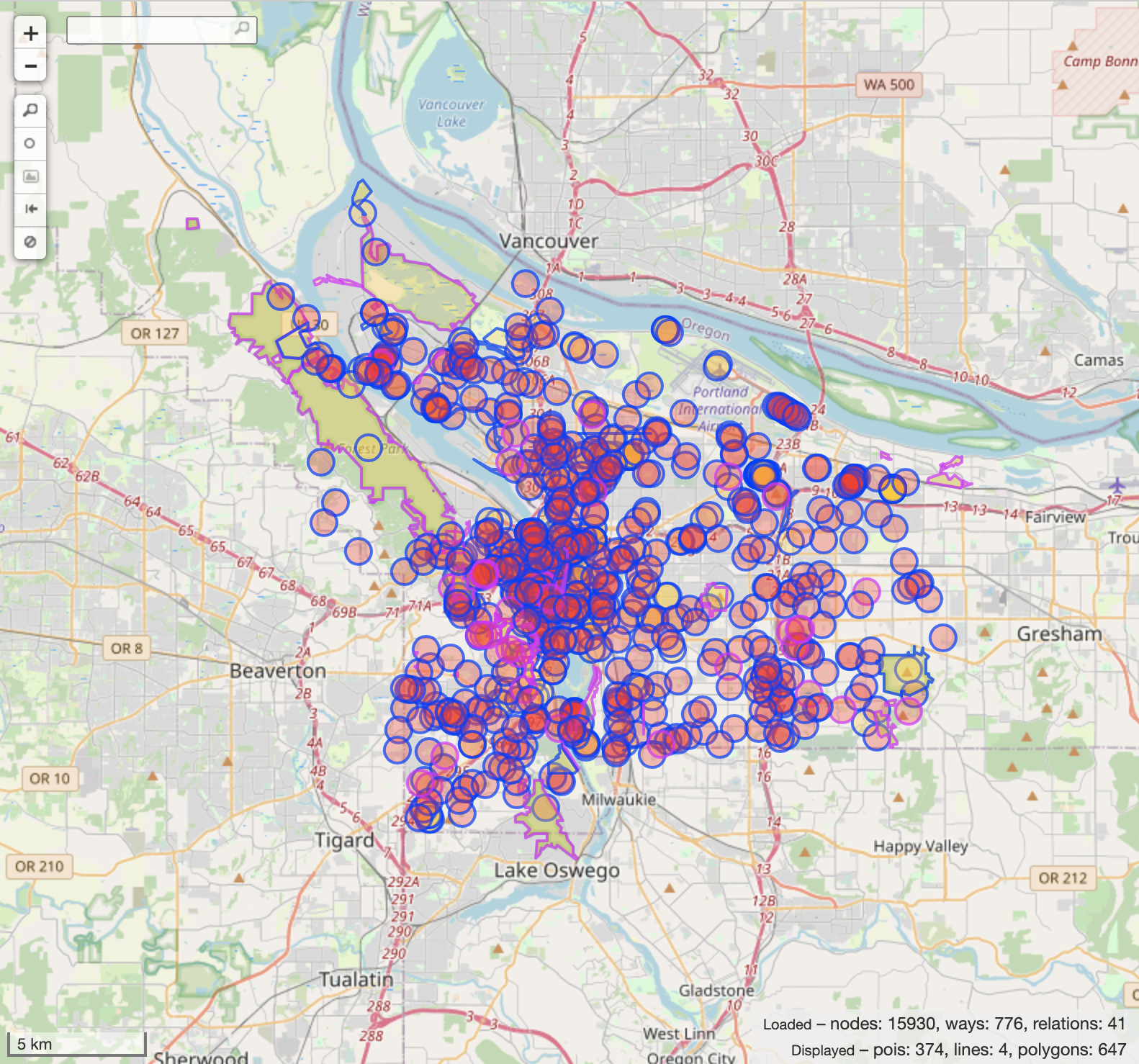

1. Collecting Points of Interest

The first step was to collect all points of interest within the cities. I did this by querying OpenStreetMap (OSM) for elements I deemed interesting.

I’ll only give the minimum overview of OSM concepts needed here, so see the OpenStreetMap Wiki for more information.

OpenStreetMap

Elements

Elements are the building blocks of OSM, representing everything in the real world. There are three types:

- Nodes: A specific point on the Earth.

- Ways: A list of connected nodes. I only use solid polygon areas.

- Relations: A relationship between elements. I only use sets of polygons, known as multipolygons, for areas with holes.

Keys

OpenStreetMap elements are described by their tags. By selecting a couple of tags I consider to represent points of interest, I can query OSM for all matching nodes, ways and relations.

Overpass

In order to access OpenStreetMap data programmatically, I relied on the Overpass API. I built all of my queries in Overpass Turbo, an interactive web GUI for Overpass.

While the API is simple enough, I used overpy to handle setup and to convert returned queries to Python types.

This made processing the points of interest easier and more pleasant.

I should mention that since this is a community-run project, it’s polite to not overload the API endpoints.

Overpass QL

For a much more detailed overview of the Overpass Query Language, see the link to the Overpass API wiki page above. My aim here was to do things as simply as possible, not as well as possible (by whatever metric that may be).

The basic structure of a query statement is as follows:

element_type[tag_filter](area_filter);

For example:

node[tourism~"gallery|museum"](area:3600186579);

selects all galleries and museums in Portland.

The area filter is an Overpass-specific value, which filters elements based on batch-processed area bounds for OpenStreetMap.

The leading 36 indicates the area is derived from a relation; the remaining digits are the relation’s ID (186579), zero-padded to 00186579.

I found it easiest to just manually grab these.
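If you would rather compute these than grab them by hand, here is a minimal sketch of the arithmetic. The function and constant names are mine, not from the project; the only assumption is that you already know the OSM relation ID of the city.

```python
# Overpass derives an area ID from an OSM relation ID by adding a fixed offset,
# which is where the leading "36" comes from.
RELATION_AREA_OFFSET = 3_600_000_000


def relation_to_area_id(relation_id: int) -> int:
    """Return the Overpass area ID for an OSM relation ID (hypothetical helper)."""
    return RELATION_AREA_OFFSET + relation_id


# Relation 186579 gives the Portland area ID used in the queries here.
print(relation_to_area_id(186579))
```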

Each statement must be printed using out:

node[tourism~"gallery|museum"](area:3600186579);

out;

This only applies to the most recent query statement.

Multiple statements can be unioned together via ():

(

node[tourism~"gallery|museum"](area:3600186579);

node[leisure~"garden"](area:3600186579);

);

out;

When selecting ways or relations, you must also recursively select all of their children via >:

(

node[leisure~"garden"](area:3600186579);

way[leisure~"garden"](area:3600186579);

relation[leisure~"garden"](area:3600186579);

);

(._;>;);

out;

._ reselects (.) the previous union output (_).

> recurses down, selecting all the descendants of the previous statement’s selections.

This is necessary because querying for a relation or way alone will not return their constituent nodes, which define their shapes and locations.

In order to get JSON (rather than XML) back from Overpass, precede your query with [out:json]:

[out:json];

(

node[leisure~"garden"](area:3600186579);

way[leisure~"garden"](area:3600186579);

relation[leisure~"garden"](area:3600186579);

);

(._;>;);

out;
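To show how a query like this could be assembled and run from Python, here is a rough sketch. `build_poi_query` and `fetch_pois` are hypothetical helper names, not the project's actual code; the only overpy call used is `overpy.Overpass().query(...)`, and overpy is imported lazily so the query builder works without it installed.

```python
def build_poi_query(area_id, tag_filters):
    """Build an Overpass QL query selecting nodes, ways and relations.

    tag_filters maps a key (e.g. "leisure") to a list of accepted values.
    """
    statements = []
    for key, values in tag_filters.items():
        pattern = "|".join(values)
        for element_type in ("node", "way", "relation"):
            statements.append(
                f'  {element_type}[{key}~"{pattern}"](area:{area_id});'
            )
    body = "\n".join(statements)
    # Union the statements, recurse down to child nodes, then print as JSON.
    return f"[out:json];\n(\n{body}\n);\n(._;>;);\nout;"


def fetch_pois(query):
    """Run the query against the public Overpass endpoint via overpy."""
    import overpy  # third-party dependency, only needed for the network call

    return overpy.Overpass().query(query)


print(build_poi_query(3600186579, {"leisure": ["garden"]}))
```

The returned overpy result exposes `nodes`, `ways` and `relations` lists. Keeping the query builder separate from the network call makes it easy to paste the generated text into Overpass Turbo first.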

Selecting Points of Interest

I played around with filtering on many different tags, and eventually settled on the following:

- tourism (things interesting to tourists): aquarium, artwork, attraction, gallery, museum, viewpoint

- leisure (things for people’s spare time): garden, nature_reserve, park, stadium

My goal here was to get a wide variety of outdoor points of interest, with even distribution and good density. To convert relations and ways to single locations for use with Street View, I opted to just average the latitudes and longitudes of their nodes, since only nodes carry coordinates of their own.
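The averaging step is simple enough to sketch in a few lines. This is an illustration rather than the project's code: `mean_location` is a hypothetical name, and it assumes you have already pulled `(lat, lon)` pairs out of a way's or relation's nodes.

```python
def mean_location(coords):
    """Average (lat, lon) pairs into a single representative point.

    Values are converted with float() in case the client library returns
    them as Decimal or strings.
    """
    coords = [(float(lat), float(lon)) for lat, lon in coords]
    if not coords:
        raise ValueError("need at least one coordinate")
    lat = sum(c[0] for c in coords) / len(coords)
    lon = sum(c[1] for c in coords) / len(coords)
    return lat, lon


# Four corners of a small, made-up rectangular park.
corners = [(45.50, -122.70), (45.50, -122.60), (45.60, -122.60), (45.60, -122.70)]
print(mean_location(corners))
```

A plain average is not a true polygon centroid, and for concave shapes it can land outside the area, which is one reason a nearby panorama might not exist.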

2. Collecting Street View Images

I tried and failed to find a decent open alternative to Google Street View, but Google offers a monthly credit that was more than enough for this project. I ended up using the Street View Static API, which provides two kinds of request: one for metadata (free) and one for panorama images (paid). A full run collecting panoramas for all points of interest across the three cities I chose cost about $70 (before credits).

The first step was to query the Street View Metadata API for the closest Street View panorama, if one exists.

This returns a pano ID corresponding to a specific panorama in Street View, as well as the latitude and longitude the panorama was taken at.

As both Google and [OpenStreetMap](https://osmdata.openstreetmap.de/info/projections.html) use WGS 84, no conversion of coordinates was needed.
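A metadata lookup might look roughly like the sketch below. The helper names are hypothetical and this is not the project's code; it assumes the documented metadata endpoint with its `location` and `key` parameters, and a JSON response carrying `status`, `pano_id` and `location`. The sample payload is made up for illustration.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

METADATA_URL = "https://maps.googleapis.com/maps/api/streetview/metadata"


def metadata_url(lat, lon, api_key):
    """Build the metadata request URL for the panorama nearest (lat, lon)."""
    params = {"location": f"{lat},{lon}", "key": api_key}
    return METADATA_URL + "?" + urlencode(params)


def parse_metadata(payload):
    """Return (pano_id, lat, lon), or None if no panorama was found."""
    if payload.get("status") != "OK":
        return None
    location = payload["location"]
    return payload["pano_id"], location["lat"], location["lng"]


def fetch_metadata(lat, lon, api_key):
    """Perform the request (needs a real API key and network access)."""
    with urlopen(metadata_url(lat, lon, api_key)) as response:
        return parse_metadata(json.load(response))


# Illustrative payload only, not a real API response.
sample = {"status": "OK", "pano_id": "abc123", "location": {"lat": 45.5, "lng": -122.6}}
print(parse_metadata(sample))
```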

Very large ways and relations often failed to find a corresponding Street View. I chalk this up to two main factors: the average of their child nodes being far from where people might take Street View photos, and large ways and relations (here) often corresponding to large, inaccessible areas.

Next, I used this ID to get the images making up the panorama. Street View represents 3D views via Cube Mapping. I opted to only use the 4 lateral images, as the sky and ground rarely differed much between panoramas and weren’t very interesting.
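One way to approximate the four lateral cube faces with the Street View Static API is to request a 90 degree field of view at four headings. This is a sketch under that assumption, not necessarily how the project fetched its images; `lateral_image_urls` is a hypothetical name, and the 640 pixel size is just a placeholder choice.

```python
from urllib.parse import urlencode

IMAGE_URL = "https://maps.googleapis.com/maps/api/streetview"
# Four headings 90 degrees apart cover the full horizontal circle.
LATERAL_HEADINGS = (0, 90, 180, 270)


def lateral_image_urls(pano_id, api_key, size=640):
    """Build one image URL per lateral face of the panorama."""
    urls = []
    for heading in LATERAL_HEADINGS:
        params = {
            "pano": pano_id,
            "size": f"{size}x{size}",
            "heading": heading,
            "pitch": 0,  # level with the horizon: no sky or ground face
            "fov": 90,   # 90 degrees per face, so four faces tile the circle
            "key": api_key,
        }
        urls.append(IMAGE_URL + "?" + urlencode(params))
    return urls


for url in lateral_image_urls("abc123", "KEY"):
    print(url)
```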

In retrospect, I found out I could have filtered out indoor panoramas. I think this would have helped remove some of the weirdly colored regions, but I’m too lazy to do this over since I’ve already deleted my API keys.

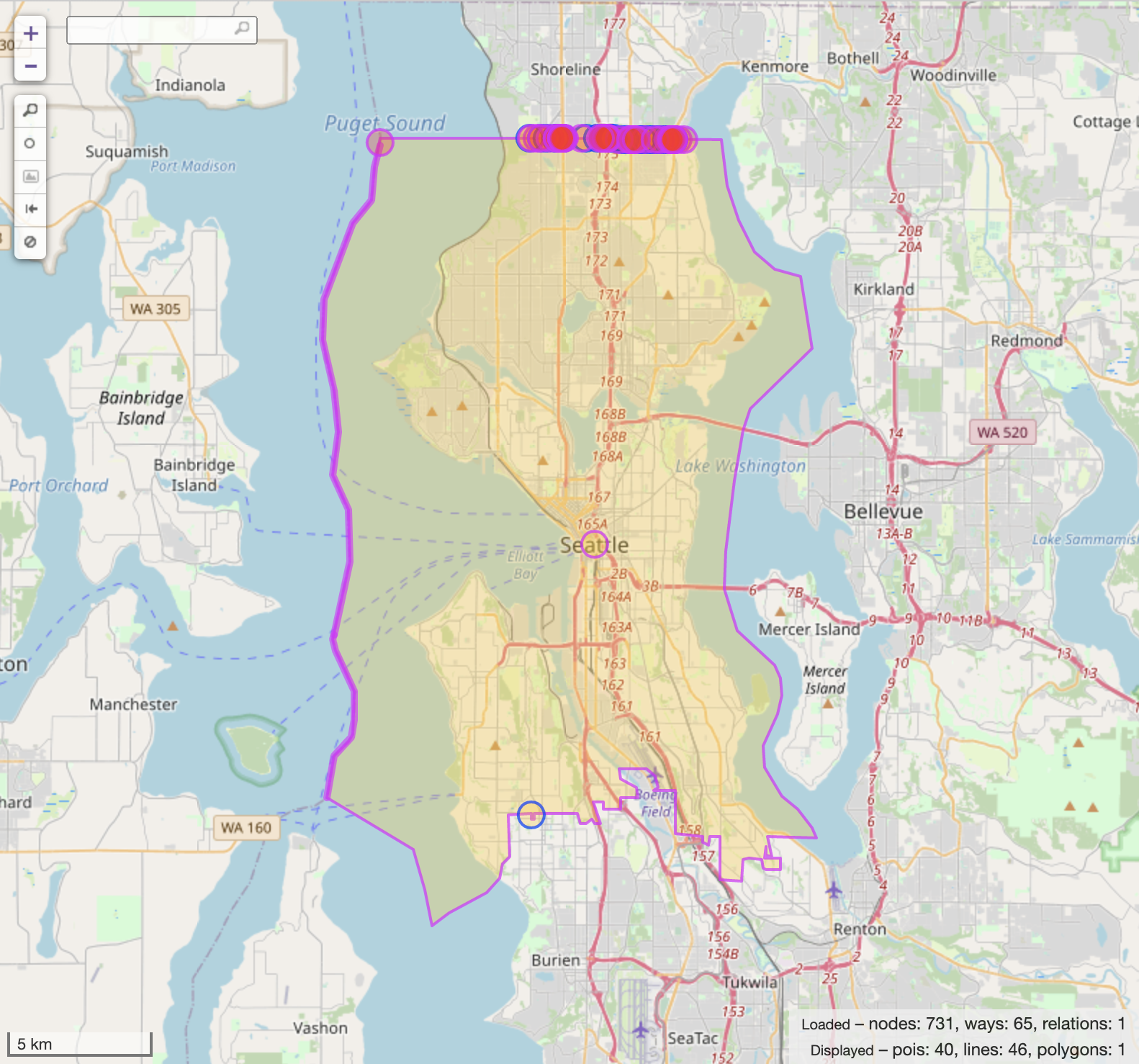

3. Making the Artwork

To make the artwork, I needed two pieces. First, the bounds of the city that would crop the Voronoi diagram. And second, the points and corresponding colors that would generate the diagram.

I grabbed the geometries of the cities’ boundaries via osmnx. I don’t know if there was a “more proper” way to do this, but it was easy and worked.

All of the geometry handling came from Shapely. This includes polygon & multipolygon classes, geometric union/difference, affine transformations and optimized data structures for finding intersections with massive geometries.

Removing Bodies of Water

The first step was to intersect the city geometry with OSM’s land polygons. OpenStreetMap provides pre-made land polygons for download, encompassing all landmasses on Earth. This worked really well to cut city boundaries down to the coastline, but this didn’t handle inland bodies of water like lakes or rivers.

To gather non-coastline water, I queried OSM for the “natural” key with the following values:

- coastline

- strait

- bay

- canal

- water

By calculating the difference of the previously intersected city bounds and the geometries for the queried features, we are left with a perfect outline of the cities’ land.
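The intersect-then-subtract step can be sketched with Shapely as follows. The toy boxes stand in for the real city, land and water geometries, and `land_outline` is a hypothetical helper name rather than the project's code.

```python
from shapely.geometry import box
from shapely.ops import unary_union


def land_outline(city, land, water_features):
    """Clip the city to the land polygon, then cut out inland water."""
    coastal = city.intersection(land)
    if not water_features:
        return coastal
    # Merge all water geometries first so the difference is computed once.
    return coastal.difference(unary_union(water_features))


# Toy data: a 10x10 city, land covering its western 8 units, one 2x2 lake.
city = box(0, 0, 10, 10)
land = box(0, 0, 8, 10)
lake = box(2, 2, 4, 4)

outline = land_outline(city, land, [lake])
print(outline.area)
```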

Converting Coordinates

Standard web maps use Web Mercator, but so far all of the coordinates have been WGS 84 latitudes and longitudes. GeoPandas provides functions for converting between coordinate systems.
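For intuition about what the conversion does, here is the spherical Mercator formula written out by hand. It assumes "Mercator" here means Web Mercator (EPSG:3857); in practice GeoPandas' `to_crs` handles this for you, and the function name below is my own.

```python
import math

# Sphere radius used by Web Mercator (the WGS 84 semi-major axis), in meters.
EARTH_RADIUS_M = 6378137.0


def wgs84_to_web_mercator(lat, lon):
    """Project WGS 84 degrees to Web Mercator meters (spherical formula)."""
    x = EARTH_RADIUS_M * math.radians(lon)
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + math.radians(lat) / 2))
    return x, y


# Roughly downtown Portland.
print(wgs84_to_web_mercator(45.52, -122.68))
```

The projection stretches distances increasingly towards the poles, so city outlines drawn this way look the way they do on familiar web maps rather than preserving true area.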

Collecting Points & Colors

This was super straightforward: for each panorama, I averaged the color of all pixels in the middle 50% horizontally. These colors were then paired with the latitude and longitude of the panorama and fed into the library to make the Voronoi diagram.
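Here is a dependency-free sketch of that averaging. It reads "the middle 50% horizontally" as the central horizontal band of rows (dropping the top and bottom quarters); that reading is my assumption, as is the representation of an image as a list of rows of `(r, g, b)` tuples.

```python
def mean_color_middle_band(pixels, keep=0.5):
    """Average the RGB values of the central band of rows.

    pixels is a list of rows, each row a list of (r, g, b) tuples.
    keep is the fraction of the image height to retain around the middle.
    """
    height = len(pixels)
    margin = int(height * (1 - keep) / 2)
    band = pixels[margin:height - margin]

    totals = [0, 0, 0]
    count = 0
    for row in band:
        for r, g, b in row:
            totals[0] += r
            totals[1] += g
            totals[2] += b
            count += 1
    if count == 0:
        raise ValueError("image has no pixels in the selected band")
    return tuple(round(total / count) for total in totals)


# A tiny 4x1 "image": only the two middle rows contribute.
image = [[(0, 0, 0)], [(10, 20, 30)], [(30, 40, 50)], [(255, 255, 255)]]
print(mean_color_middle_band(image))
```

With several images per panorama, the same function could be applied to each and the results averaged, or the pixel totals pooled before dividing.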

view.py on GitHub shows some of the exploration I did in trying—and failing—to come up with a better way to color Voronoi regions than a boring old average color.

Making the Voronoi Diagram

geovoronoi was the backbone of the art project. It takes in the processed points and trimmed city bounds above, and returns the geometries of the Voronoi diagram fit to the city.

At this point we are almost done. All that is left is to do some minor transformations of the geometries and write them out to an SVG. Luckily, Shapely has a nice helper method to convert geometries to SVG paths.
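To show what writing cells out to SVG involves, here is a hand-rolled sketch. It deliberately does not use Shapely's helper, so it runs without dependencies; the function names are hypothetical, and each cell is assumed to be a simple ring of `(x, y)` points plus an RGB color. The y coordinate is flipped because SVG's y axis points down.

```python
def rgb_to_hex(color):
    """Format an (r, g, b) tuple of 0-255 ints as a CSS hex color."""
    r, g, b = color
    return f"#{r:02x}{g:02x}{b:02x}"


def ring_to_svg_path(ring, fill, height):
    """Convert a ring of (x, y) points into a filled SVG <path> element."""
    points = list(ring)
    # Drop a repeated closing point; the "Z" command closes the path.
    if len(points) > 1 and points[0] == points[-1]:
        points = points[:-1]
    commands = []
    for i, (x, y) in enumerate(points):
        op = "M" if i == 0 else "L"
        commands.append(f"{op} {x:g},{height - y:g}")
    d = " ".join(commands) + " Z"
    return f'<path d="{d}" fill="{fill}" />'


def cells_to_svg(cells, width, height):
    """Wrap (ring, color) cells in a minimal SVG document."""
    paths = [ring_to_svg_path(ring, rgb_to_hex(color), height) for ring, color in cells]
    body = "\n".join(paths)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 {width} {height}">\n'
        f"{body}\n</svg>"
    )


triangle = [(0, 0), (10, 0), (10, 10), (0, 0)]
print(cells_to_svg([(triangle, (255, 0, 128))], 10, 10))
```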

Results

So how did it turn out? I think the answer is okay. The graphics are pretty neat, but I definitely see lots of areas for improvement if only I had infinite time.